Warm Take: same as it ever was

Yesterday's beautiful snowy weather would have been an occasion to dig into an Icelandic thriller. Instead, I was in front of the fireplace, cocoa in hand, reading On the Dangers of Stochastic Parrots: Can Language Models Be Too Big? 🦜

Two smart programmer friends recently initiated (separate) conversations about ChatGPT and GPT-3. I came to each conversation with resistance. In all of the everything that was 2022, and then the holidays, I had intentionally paid no mind to these emerging tools.

After hearing their points about when the tools had been useful or time-saving, headdesk, I decided it was time to pay attention.

In my layperson's naive understanding, companies behind these tools have essentially created technology that has "slurped up" more of the internet than any of us can read. Using all of the (English) language the tech has ingested, the companies have created predictive models that enable the technology to produce strings of text in response to queries.

I know there are readers out there with real AI expertise. Please do school me!

However, the layperson's perspective is all I care about right now. 10 years ago, that first-time manager on your team may have looked to YouTube for instruction on, say, how to fire someone. Tomorrow, or maybe today, they'll ask ChatGPT.

Laypeople will operationalize this technology.

Kirsten Lambertsen is a product innovation consultant, and founder of PatronHunt.com, the fastest growing index of independent creators online. She's also one of the friends who drew me into this conversation.

I told Kirsten about my concerns about how emerging managers might use this technology, say, to find out how to give feedback.

Kirsten suggested a query for GPT-3: "Act as if you are a management coach. Give me ideas on how to phrase negative feedback constructively for a someone who reports to me. My first question is, 'How do I tell someone that they need to improve their personal hygiene?'"

GPT returned:

- “I understand that personal hygiene is important and I want to make sure you are aware of how it affects your performance. How can I help you to improve your personal hygiene?”

- “I understand that personal hygiene is important and I’m concerned that it might be affecting your performance. What steps can you take to improve your personal hygiene?”

- “I’ve noticed that your personal hygiene could be improved. What do you think are the best ways to make sure you’re taking care of yourself in this area?”

- “I’m here to help you make sure that your personal hygiene is up to par. Can you tell me what steps you think will help you improve in this area?”

Um, no. But the GPT-3 game is to ask the right question. We wound up asking a series of increasingly refined questions.

When Kirsten's query was, "Can you put it a little more delicately," GPT-3 returned 4 responses, the best (imo) of which was, “I’m sure you understand the importance of personal hygiene, but I’m wondering if there’s anything I can do to help you make sure you’re taking care of yourself in this area.”

We upped the query: what if you had been managing people for 25 years? GPT-3 returned 5 very similar responses, using more complex language, lol.

Our final salvo on personal hygiene: what if you were a feminist who had been managing people for 25 years who wanted to give compassionate feedback to a team member?

This time, GPT-3 returned only one response: “I understand that personal hygiene can be a sensitive topic. I want to make sure that you feel comfortable and taken care of in the workplace. Can we talk about how we can make sure that you maintain a high level of hygiene while still feeling respected and valued in the workplace?”

As a first draft, the final response – which required a query from two feminist managers with 40+ years of combined experience – at least nods to the values of treating a worker with dignity and respect.

The problem is, of course, that GPT-3 is not a You. The technology produces strings of text that will be met by humans, and will require human understanding and experience to be rendered useful.

GPT-3 doesn't know what personal hygiene is. It doesn't "know" anything, including the components of meaningful feedback. It doesn't "understand" the subtle ways in which body odor – or, visibly dirty clothing, bad breath, lingering cigarette smoke – might limit a team member's advancement. It has no context other than the language in the model; it does not account for any possible sources of a worker's perceived hygienic lapse.

Humans make sense; the technology does not. It is not competent.

When called on to address someone's hygiene at work for the first time – or even the second or fifth time – get some help from your manager, HR, or someone in your trusted circle. This is not work to do alone.

I also found a story on the internet about someone who had been fired after making an issue of a co-worker's (supposed) body odor. After being fired, they sued their employer. The court dismissed the case, commenting that the co-worker did not have body odor, and noted the fired worker's complaint had been a form of bullying.

Finally, for today, these tools are "trained" on a largely undocumented dataset that was "slurped up" from the Internet.

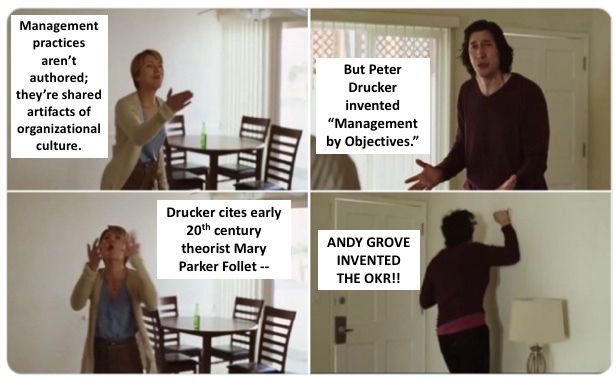

I asked Kirsten to make one more query of GPT-3, "Can you give me a history of the OKR?"

"The OKR (Objectives and Key Results) system was developed in the late 1970s by Intel’s CEO, Andy Grove. The system was created in response to a need for a better way to measure and communicate progress and goals throughout the organization. The idea behind OKRs is to set clear, measurable objectives and then track progress against those objectives. The system was quickly adopted by other companies, such as Google, and has become a popular performance management system in the modern business world. The OKR system is based on four key principles: clarity, alignment, focus, and commitment. These principles are used to ensure that objectives are set, tracked, and achieved. The OKR system is used to help organizations reach their goals, improve performance, and increase engagement."

Basically, GPT-3 serves back what it knows about OKRs largely from Reddit, Wikipedia, and "a collection of books." This echo chamber has a lot of questionable information.

In this case, it eliminates the contribution of every known and unknown manager or academic whose work is contained in the notion of an OKR. Including at least one woman of note, Mary Parker Follett.

Andy Grove did not "invent" the OKR. In 2018, I did a close read of Grove's High Output Management. I also read Andy's autobiography, and my guess is that Andy did not consider himself to have invented this management practice, lol. Sadly, he died in 2016, so I can't ask him.

My commentary on High Output Management was originally for supporters, and I've unpaywalled this group of posts.

(pp. 1-50) (pp. 51-100) (pp. 101-150) (pp. 150-200ish) (pp. 200ish-conclusion)

Speaking of the history of women in business, CV Harquail's new course, Feminist Business History and Heritage: Learning from our Foremothers will kick off on February 7.

CV is the author of Feminism: A Key Idea for Business and Society (Bookstore.org) (library), which we talked about in Are norms really normal? On Management #39

It will not go without saying that Timnit Gebru has been a scholar and critic of the invisible algorithms that might be used to send armies of police officers into non-white communities. Or that people on your team may be in active mourning about the loss of Tyre Nichols. May you have the privilege to enable the space and time they may need.

This is getting long, and it's after noon on Sunday. I try to write these Warm Takes quickly on Sunday morning, but this one took a bit of preliminary work, lol. As per the norm with Warm Takes, there may be typos and inelegant turns of phrase, which I will probably fix later on, on the Internet.

Thanks to Kirsten, for being game to share her GPT-3 experience with me, for letting me quote her, and for introducing me to the notion of "slurping" data. Thanks to Luke, whom I didn't take time to ask for his permission to quote, cite, or name. So I didn't. That said, our conversation prepared me to listen!

Thank you for reading and supporting my newsletter.

May you, your loved ones, and all of our communities, be safe, healthy and free.

Showing my work

- On the Dangers of Stochastic Parrots: Can Language Models Be Too Big? 🦜 Emily M. Bender, Timnit Gebru, Angelina McMillan-Major, Shmargaret Shmitchell. FAccT '21: Proceedings of the 2021 ACM Conference on Fairness, Accountability, and Transparency, March 2021, Pages 610–623. This is the paper that got Timnit Gebru fired from Google.

- On the dangers of stochastic parrots: Can language models be too big? 🦜 Seminar, The Alan Turing Institute, July 13 2021, Emily M. Bender, with panellists Dr Anjali Mazumder, Dr Zachary Kenton and Professor Ann Copestake. Host: Dr Adrian Weller. (I read the full article, above, first. This video presentation covers the paper's high points, adding additional commentary, including brief and relevant discussion about human-machine interaction. Lol if I had known about the video I might have taken it in first, with my cocoa.)

- We read the paper that forced Timnit Gebru out of Google. Here’s what it says, Karen Hao, MIT Technology Review, December 4, 2020.

- Video intro to Datasheets for Datasets, by Timnit Gebru, Jamie Morgenstern, Briana Vecchione, Jennifer Wortman Vaughan, Hanna Wallach, Hal Daumé III, Kate Crawford, Communications of the ACM, December 2021, Vol. 64 No. 12, Pages 86-92, 10.1145/3458723

- All-knowing machines are a fantasy: Beware the human-sounding ChatGPT, Emily M. Bender and Chirag Shah, at iai News, the Institute of Art and Ideas, 13th December 2022.

- Google Paper Cites Research at Center of Its Staff Firestorm, Dina Bass, Bloomberg, April 5 2022.

- How To Stop Artificial Intelligence From Marginalizing Communities? Timnit Gebru, TEDxCollegePark, June 21, 2018.

- Employee files ‘chronic body odor’ lawsuit, Indianapolis Star, via Detroit News, January 2, 2018.

- Lawsuit: Fired For Hanging Air Fresheners Because of Smelly Co-Worker, Suzanne Lucas, Inc magazine.

- Bridges v. City of Indianapolis

Full title: AMBER BRIDGES, Plaintiff, v. CITY OF INDIANAPOLIS, Defendant.

Court: UNITED STATES DISTRICT COURT SOUTHERN DISTRICT OF INDIANA INDIANAPOLIS DIVISION

Date published: Jul 11, 2019 Bridges v. City of Indianapolis, No. 1:17-cv-04706-DML-WCG (S.D. Ind. Jul. 11, 2019)

Imo this case is worth reading for what can happen – by my inference – when bad behavior is allowed to fester at work.

- Competence: On Management #48.

- Warm Take: Feedback.

- And whoa, I just found this article as I was about to hit "send," will read. Mary Parker Follett as Integrative Public Philosopher, Matthew J. Brown, Cambridge University Press: 19 April 2021.